jTab 1.1: Guitar tab for the web gets an update and a mailing list

I announced jTab back in July, and there have been

some nice improvements over the past month which I just tagged as a "1.1" release.

jTab is a JavaScript-based library that allows you to easily render arbitrary guitar chord and tablature (tab)

notation on the web. Automatically. It is open source (available

on github).

I've also established a mailing list for jTab. All are welcome to

join in to discuss internal development issues, usage, and ideas for enhancement.

Some of the key new features:

- All chords can be represented in any position on the fretboard e.g. Cm7 Cm7:3 Cm7:6

- Now allows shorthand tab entry of 6-string chords e.g. X02220 (A chord at nut), 8.10.10.9.8.8 (C chord at the 8th fret)

- jTab diagrams now inherit foreground and background color of the enclosing HTML element

- When entering single-string tab, you can reference strings by number (1-6) or by note in standard tuning (EADGBe)

- The chord library with fingerings has been extended to cover pretty much all common - and uncommon - chord variants (m, 6, m6, 69, 7, m7, maj7, 7b5, 7#5, m7b5, 7b9, 9, m9, maj9, add9, 13, sus2, sus4, dim, dim7, aug).

- It has been integrated with TiddlyWiki: jTabTwiki combines the guitar chord and tab notation power of jTab with the very popular TiddlyWiki single-file wiki software. Together, they allow you to instantly set up a personal guitar tab wiki/notebook. No kidding. And it's free.

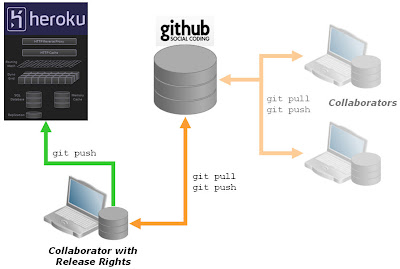

Rails dev pattern: collaborate on github, deploy to heroku

Heroku is an awesome no-fuss hosting service for rails applications (I think I've raved about it enough).

It works great for solo development. But what if you want a large team to work on the app, while limiting production

deployment privileges? Or if you want the application to run as an open source project?

Since git is core infrastructure for heroku, it actually makes setting up distributed source control trivial, like in

the diagram:

Here's a simple pattern for setting up this way. It may fall into the special category of "the bleeding obvious" if you

are an experienced git user. But many of us aren't;-)

First, I'm assuming you have a rails application in a local git repository to start with. Like this:

$ rails test

$ cd test

$ git init

$ git add .

$ git commit -m "initial check-in"

Next, you want to create a new, empty repository on github. Github will give you a clone URL for the new repo, like

"git@github.com:mygitname/test.git".

Now we can add the github repo as a new remote location, allowing us to push/pull from github. I'm going to name the destination "github":

$ git remote add github git@github.com:mygitname/test.git

$ git push github master

Enter passphrase for key '/home/myhome/Security/ssh/id_rsa':

Counting objects: 3, done.

Writing objects: 100% (3/3), 209 bytes, done.

Total 3 (delta 0), reused 0 (delta 0)

To git@github.com:mygitname/test.git

* [new branch] master -> master

At this point, you are set up to work locally and also collaborate with others via github. If you have a paid account on github, you can make this a private/secure collaboration, otherwise it will be open to all.

Next, we want to add the application to heroku. I'm assuming you are already registered on heroku and have the heroku gem set up. Creating the heroku app is a one-liner:

$ heroku create test

Created http://test.heroku.com/ | git@heroku.com:test.git

Git remote heroku added

$

You can see that this has added a new remote called "heroku", to which I can now push my app:

$ git push heroku master

Enter passphrase for key '/home/myhome/Security/ssh/id_rsa':

Counting objects: 29, done.

Delta compression using up to 2 threads.

Compressing objects: 100% (17/17), done.

Writing objects: 100% (17/17), 2.17 KiB, done.

Total 17 (delta 12), reused 0 (delta 0)

-----> Heroku receiving push

-----> Rails app detected

Compiled slug size is 208K

-----> Launching....... done

http://test.heroku.com deployed to Heroku

To git@heroku.com:test.git

4429990..4975a77 master -> master

So we are done! I can push/pull from the remote "github" to update the master source collection, and I can push/pull to the remote "heroku" to control what is deployed in production.

Sweet!
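
If you want to rehearse the pattern before touching real accounts, here is a minimal sketch. It assumes two local bare repositories standing in for the "github" and "heroku" remotes (so there is no network, no passphrase prompt, and no actual deployment), and the README file and the user name/email are placeholders I made up for the example. It pushes HEAD explicitly to master so it does not matter what your local default branch is called.

```shell
#!/bin/sh
# Sketch only: local bare repos stand in for the real github and heroku remotes.
set -e

sandbox=$(mktemp -d)
git init --quiet --bare "$sandbox/github.git"   # stand-in for git@github.com:mygitname/test.git
git init --quiet --bare "$sandbox/heroku.git"   # stand-in for git@heroku.com:test.git

# A local working repository with one commit, in place of the rails app.
git init --quiet "$sandbox/app"
cd "$sandbox/app"
git config user.name "Example Dev"              # placeholder identity
git config user.email "dev@example.com"
echo "placeholder for the rails app" > README
git add .
git commit --quiet -m "initial check-in"

# Name both destinations, as in the steps above.
git remote add github "$sandbox/github.git"
git remote add heroku "$sandbox/heroku.git"

# Share the source first, then "deploy" the same commit.
git push --quiet github HEAD:refs/heads/master
git push --quiet heroku HEAD:refs/heads/master

# Read back the commit each remote has on master.
github_head=$(git ls-remote github refs/heads/master | cut -f1)
heroku_head=$(git ls-remote heroku refs/heads/master | cut -f1)
echo "github: $github_head"
echo "heroku: $heroku_head"
```

The two hashes printed at the end should match, which is the whole point: the collaboration remote and the deployment remote are just two named destinations for the same commits, and you choose when each one gets updated.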

PS: Once you are comfortable with this, you might want to get a bit more sophisticated with branching between environments. Thomas Balthazar's "Deploying multiple environments on Heroku (while still hosting code on Github)" is a good post to help.

Launched: I Tweet My Way - Getting things done for the twitter generation

I Tweet My Way is a twitter application to help you

to set goals and get things done with the support of your friends and followers.

It's an application I've had in stealth for a while, but decided it is about time to let it out in the wild.

Do you have a goal you really want to work on? Quitting smoking, losing weight, paying off the credit card, or learning

a new skill - these (and anything else you can imagine) are all suitable objectives to set yourself with I Tweet My Way.

I've had a long-standing interest in goal setting and tracking, but I must admit it was the advent of the

"twitter-application" fad that got me thinking about how you could do a "getting things done" style personal trainer

with Twitter. Now I'm looking forward to seeing how it gets used for real. I'm very interested in any feedback you may

have. Did it help? Does it work? Why didn't it help or fit your needs?

Technically, it was built with rails and uses the Twitter OAuth support for authentication (you can read more about that here). I have it hosted at heroku (my favourite rails hosting service, although I am a bit leery about performance in the

Asian region at the moment).

NB: the site currently comes without soundtrack, but think "mbube,

the lion sleeps tonight";-)

KISSWorld - applying good design to mundane matters

Must be at least two years ago that Singapore Airlines changed the layout of

their KrisWorld inflight entertainment magazine and it has bugged me ever since. The update coincided with a revamp of

the entertainment on offer (a staggering 80 movies and hundreds of CDs). Unfortunately, the magazine suffered.

I've been waiting for SIA to "fix" KrisWorld, but last I flew it was still the same. Maybe one day. Do let me know if

you see a new layout on any of their flights!

But it got me thinking, and I thought it worth discussing because it seems a good example of how marketing-driven design changes can have unintended usability consequences despite everyone's best

intentions.

Don't get me wrong, SIA remains my favourite airline of all, but it is disheartening to see that even the best airline

in the world is susceptible to getting stuck with "bad design". Makes you wonder if there is any hope for the rest of

us.

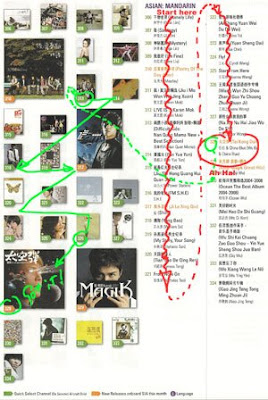

My gripe is with the layout of the CD selections.

How do you select an album you might want to listen to?

- You might recognise the album cover

- Maybe you like certain artists, but don't know the specific albums available

- Or you might be looking for a certain album title

- And for some, you don't recognise the album art, title or artist but are attracted to sample it because of the genre or the cover

When looking through a long list of albums, chances are that all of these methods of recognition and selection are at play.

The trouble with KrisWorld is that they have separated the album cover display from the listing of artist and album name. The only thing that links them is the artificial numeric code that is applied to each.

On the left is an approximation of my actual scan pattern when trying to make a selection.

First I scan the album covers. Many I don't recognise and skip over.

I find something I think I recognise. To be sure, I then cross-reference into the album list and start another search using the special code number.

At this point I'm wondering if eye exercises are a safety feature designed to prevent DVT, or just intended to make the flight pass more quickly.

Maybe Joanne Wang is a little too sedate for how I'm feeling now, so I start another search through the album/artist list.

Down we go. Some I recognise (but without the album cover I'm not 100% sure).

Ahah, Wu Bai. That's more like it. But which album is this? Cripes, time to find the matching album cover to make sure.

Finally. Time to listen. A good thing this is a CD and not a movie, because my eyes need a rest now.

Why do I need to work so hard? How to solve this usability nightmare?

Well, one suggestion is to just keep it simple. Cover art, album title, and artist are bits of information that both separately and in combination help me search the listings in the most effective way. So just put it all together in the list. For example:

This eliminates all cross-referenced look-ups, is simple and direct, and does not require significantly more space. Best of all, as a "user" it is effortless.

Funny ... isn't this exactly how the layout used to be designed?

The lesson? Sometimes, designs must be seen to change for marketing or other business reasons, letting you loose in a requirements vacuum. The danger is that in the absence of specific functional or usability needs, other factors such as aesthetics and branding will expand to fill the void. Done carelessly, you can inflict untold collateral damage on the product through the process.

The solution? Consciously re-introduce at least a usability/functional benchmark into the design process - "be no worse than it was before". Better yet, ensure usability improvements remain a key objective - no matter how good you might think it was before, perfection is always one better.

And yes, usability applies as much to the printed page as it does to the web!
