Line Rider - jump the shark

I've had fun playing around with Line Rider before ... but this is something else!

read more and comment..

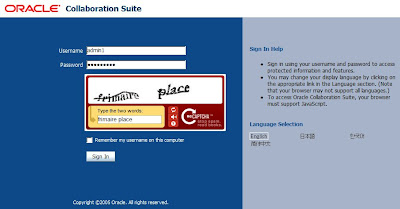

Adding reCAPTCHA to Oracle SSO

I've blogged previously about playing with the reCAPTCHA service in Perl. Seriously cool! Not because it's foolproof - it isn't - but because the side-effect of helping to digitize old documents and books is a truly great idea.

I'm starting to see reCAPTCHA more often now. Bex Huff put it in his comment form, and blogged about it (though I can't find his posting anymore. Update: link from Bex, thanks!). But I haven't seen it used with Oracle SSO yet ... sounds like an interesting weekend project!

So I had a poke around, and would like to share the solution. Although I am going to integrate the reCAPTCHA service, you could use the same approach to add any 2nd or 3rd factor to the SSO authentication process. The end result is the reCAPTCHA appearing and working in the Oracle SSO login page. The sample here is based on the Oracle Collaboration Suite 10g branding:

The sources for my example are available as OssoRecaptcha-1.0-src.zip.

See readme.txt in the zip for more detailed instructions and discussion.

There are basically two things we need to take care of to integrate reCAPTCHA. First, customise the login page to render the captcha challenge. Second, insert a custom authenticator to handle the captcha validation before the standard authentication.

I've used the ReCaptcha Java Library released by Tanesha Networks to simplify things.

Customising the Login Page

This is the simplest part, and pretty well documented in "Creating deployment-specific pages".

The following code renders the captcha challenge and just needs to be included in the login page at an appropriate point.

    <%
    // create recaptcha
    ReCaptcha captcha = ReCaptchaFactory.newReCaptcha(RecaptchaConf.RECAPTCHA_PUBLIC_KEY, RecaptchaConf.RECAPTCHA_PRIVATE_KEY, false);
    String captchaScript = captcha.createRecaptchaHtml(request.getParameter("error"), null);
    out.print(captchaScript);
    %>

RecaptchaConf is a class included in the sample to hold your site-specific reCAPTCHA keys, which you can easily get by registering at http://recaptcha.org.
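For orientation, a key-holder class along the following lines would satisfy the references to RecaptchaConf in the scriptlet. Treat it as a sketch only: the two constant names come from the code above, but the placeholder values and the class layout are assumptions, and the real class is the one shipped in the sample zip.

```java
// Hypothetical sketch of a key-holder class; the actual RecaptchaConf is in the sample sources.
final class RecaptchaConf {

    // Placeholder values (assumptions): substitute the keys issued when you register your site.
    static final String RECAPTCHA_PUBLIC_KEY = "your-public-key";
    static final String RECAPTCHA_PRIVATE_KEY = "your-private-key";

    private RecaptchaConf() {
        // constants only, never instantiated
    }
}
```

In a real deployment the class and its fields would need to be public and live in a package, so that both the login page and the authenticator can see them.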

Customising SSO Authentication

We have a simple task: intercept and evaluate the captcha response before allowing standard SSO authentication to proceed. Simple, yet unfortunately not exactly documented. The documentation for "Integrating with Third-Party Access Management Systems" is almost what we need to do, but not quite.

The approach I have taken is to sub-class the standard authenticator (oracle.security.sso.server.auth.SSOServerAuth) rather than just implement an IPASAuthInterface plug-in.

The only method of significance is "authenticate", where if the captcha response is present, we evaluate it prior to handing off to the standard authentication.

    public IPASUserInfo authenticate(HttpServletRequest request)
        throws IPASAuthException, IPASInsufficientCredException
    {
        SSODebug.print(SSODebug.INFO, "Processing OssoRecaptchaAuthenticator.authenticate for " + request.getRemoteAddr());
        if (request.getParameter("recaptcha_challenge_field") == null) {
            throw new IPASInsufficientCredException("");
        } else {
            // create recaptcha and test response before calling auth chain
            ReCaptcha captcha = ReCaptchaFactory.newReCaptcha(RecaptchaConf.RECAPTCHA_PUBLIC_KEY, RecaptchaConf.RECAPTCHA_PRIVATE_KEY, false);
            ReCaptchaResponse captcharesp = captcha.checkAnswer(request.getRemoteAddr(),
                request.getParameter("recaptcha_challenge_field"),
                request.getParameter("recaptcha_response_field"));
            SSODebug.print(SSODebug.INFO, "ReCaptcha response errors = " + captcharesp.getErrorMessage());
            if (!captcharesp.isValid()) {
                throw new IPASAuthException(captcharesp.getErrorMessage());
            }
            return super.authenticate(request);
        }
    }

A couple of things to note:

- This method is first called prior to the login challenge to see if you are already authenticated, hence the check for a captcha response before boldly going ahead to authenticate.

- The specific exception messages raised in this class seem to get "lost" by the time the handler returns to the login page (at which point you always seem to have a generic failure message). In other words, users will basically just get told to try again. I haven't found a way around this yet.

- See the example usage of SSODebug to log messages, which will appear in the SSO log (as configured in ORACLE_HOME/sso/conf/policy.properties).

- We'll deploy the custom class into the OC4J_SECURITY container, rather than to $ORACLE_HOME/sso/plugins since it seems plugins get a limited environment that does not include all of the required support classes. Deploying to OC4J_SECURITY avoids this problem.
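To make the control flow (and the first note above) concrete without needing the Oracle or reCAPTCHA jars, here is a stripped-down sketch of the same "check the extra factor, then hand off" idea. Every type in it is a hypothetical stand-in: the two exceptions mimic IPASInsufficientCredException and IPASAuthException, request parameters are modelled as a plain Map, and it uses delegation to a wrapped authenticator rather than the sub-classing used in the sample, purely so that it runs on its own.

```java
import java.util.HashMap;
import java.util.Map;

/** Stand-in for IPASInsufficientCredException: nothing has been submitted yet. */
class InsufficientCredentialsException extends Exception {
    InsufficientCredentialsException(String message) { super(message); }
}

/** Stand-in for IPASAuthException: something was submitted but rejected. */
class AuthenticationFailedException extends Exception {
    AuthenticationFailedException(String message) { super(message); }
}

/** Hypothetical abstraction over the standard authenticator; returns the user name. */
interface Authenticator {
    String authenticate(Map<String, String> params)
            throws InsufficientCredentialsException, AuthenticationFailedException;
}

/** Hypothetical abstraction over any extra factor (captcha, one-time code, ...). */
interface ExtraFactor {
    String fieldName();
    boolean isValid(Map<String, String> params);
}

/** Checks the extra factor first, then delegates to the wrapped authenticator. */
class ExtraFactorAuthenticator implements Authenticator {
    private final ExtraFactor factor;
    private final Authenticator next;

    ExtraFactorAuthenticator(ExtraFactor factor, Authenticator next) {
        this.factor = factor;
        this.next = next;
    }

    @Override
    public String authenticate(Map<String, String> params)
            throws InsufficientCredentialsException, AuthenticationFailedException {
        // The first call arrives before the login page is shown, so the field is absent.
        if (params.get(factor.fieldName()) == null) {
            throw new InsufficientCredentialsException("extra factor not supplied");
        }
        // The factor was submitted: reject early if it does not check out.
        if (!factor.isValid(params)) {
            throw new AuthenticationFailedException("extra factor rejected");
        }
        // Only now hand off to the standard authentication.
        return next.authenticate(params);
    }

    public static void main(String[] args) throws Exception {
        ExtraFactor pin = new ExtraFactor() {
            public String fieldName() { return "pin"; }
            public boolean isValid(Map<String, String> p) { return "1234".equals(p.get("pin")); }
        };
        Authenticator auth = new ExtraFactorAuthenticator(pin, p -> "alice");
        Map<String, String> params = new HashMap<>();
        params.put("pin", "1234");
        System.out.println(auth.authenticate(params));
    }
}
```

Swapping in a different ExtraFactor is all it takes to try another second factor, which is the sense in which the approach is not specific to reCAPTCHA.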

Deployment

The most robust approach to deployment is to explode, modify and then rebuild the OC4J_SECURITY EAR file ($ORACLE_HOME/sso/lib/ossosvr.ear) once you are confident everything is working fine. I haven't covered how to do that here, however.

Rather, I'm deploying the sample directly into an existing OC4J_SECURITY container. Note that with this approach, if you ever redeploy the OC4J_SECURITY application (which can happen during an upgrade or patch, for example), then your changes will be lost.

There's an Ant build script included in the sample that takes care of the details, but it is pretty straightforward...

Firstly, two copy operations:

- Copy the login page to $ORACLE_HOME/j2ee/OC4J_SECURITY/applications/sso/web/

- Copy the supporting jar files to $ORACLE_HOME/j2ee/OC4J_SECURITY/applications/sso/web/WEB-INF/lib/

Secondly, update the authentication plug-in setting in ORACLE_HOME/sso/conf/policy.properties:

    MediumSecurity_AuthPlugin = oracle.security.sso.server.auth.SSOServerAuth
    # replaced with:
    MediumSecurity_AuthPlugin = com.urion.captcha.OssoRecaptchaAuthenticator

Finally, we are ready to restart the OC4J_SECURITY container

    opmnctl restartproc process-type=OC4J_SECURITY

and test out the customised login. Try...

    http://you.site:port/oiddas

Give it a go! I'd love to hear from anyone who deploys reCAPTCHA on a production Oracle Portal or Applications site, for example.
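If you would rather script the MediumSecurity_AuthPlugin change than edit it by hand, something like the sketch below could do it. It is an illustration under stated assumptions, not part of the sample: the class and method names are made up, it assumes the setting lives in the policy.properties file mentioned earlier, and it works on the file's lines as plain text (ignoring the less common properties syntax such as ":" separators or "!" comments).

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical helper: points MediumSecurity_AuthPlugin at a different class. */
final class AuthPluginSwitcher {
    static final String KEY = "MediumSecurity_AuthPlugin";

    private AuthPluginSwitcher() {
    }

    /**
     * Returns a copy of the given lines in which each active KEY entry is
     * commented out and followed by a new entry for newClass. If no active
     * entry exists, the new entry is appended at the end.
     */
    static List<String> switchPlugin(List<String> lines, String newClass) {
        List<String> out = new ArrayList<>();
        boolean replaced = false;
        for (String line : lines) {
            String trimmed = line.trim();
            boolean isActiveEntry = !trimmed.startsWith("#")
                    && trimmed.startsWith(KEY)
                    && trimmed.substring(KEY.length()).trim().startsWith("=");
            if (isActiveEntry) {
                out.add("# " + trimmed);          // keep the old value for easy rollback
                out.add(KEY + " = " + newClass);  // the replacement entry
                replaced = true;
            } else {
                out.add(line);                    // everything else passes through untouched
            }
        }
        if (!replaced) {
            out.add(KEY + " = " + newClass);
        }
        return out;
    }
}
```

Reading the file with java.nio.file.Files.readAllLines, passing the result through switchPlugin and writing it back with Files.write would complete the round trip; keep a backup of the original file either way.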

Postscript: Patrick Wolf obviously had a weekend free also, and has now posted a solution for adding reCAPTCHA to APEX ;-) Cool!

Postscript 2008-06-03: I finally got around to setting this up with its own SourceForge project.

read more and comment..

A Different Point of View

The Jedi are terrorists, and the Star Wars saga just pulp propaganda undermining the Empire's sworn duty to protect peace and prosperity through the galaxy. Regular citizens just want to get on with life, but will the rebels let them? Hell no!


That is a different point of view ;-)

This podcast series, presented from the point of view of stormtrooper TD-0013, is a classic. If you've seen the Star Wars movies, prepare to be shocked into realising that Lucas just got totally sucked in by the flimsy lies of the Rebel Alliance.

I found this at podiobooks [now scribl] where you can subscribe to the series RSS feed for your iPod or whatever, or check out the author's site (now archived). Highly recommended if you grew up with Luke Skywalker and Princess Leia.

read more and comment..

Hunter Killer

Patrick Robinson's Hunter Killer is another bomb-the-bastards ripping yarn. He excels in creating dire yet plausible scenarios of truly global impact. The background to this story is a revolution in Saudi Arabia, backed by the French.

Forget about political correctness, and you can enjoy the story. It is a somewhat predictable plot however, and lacks a strong sense of suspense. I'd previously read Scimitar SL-2, which shares many of the same characters (set 2 years earlier), and I thought it was overall a better read because it plays more on suspense. (In that story, nukes are in the hands of terrorists. It's a race to see if they can be prevented from using them to trigger a major earthquake and tidal wave.)

Hunter Killer does provoke some interesting thoughts, if you can see beyond the gung ho antics.

Firstly, the complicity of France does bring you to question some entrenched and largely invisible prejudices. Bomb Baghdad? Sure, civilian casualties are unfortunate but can't be helped in our fight against the regime. Bomb Paris, despite clear evidence that France is acting as a renegade state? We-ell, let's think about that a bit. Surely another solution is possible?

Second, there's a fairly sympathetic treatment of the Saudi revolution. EM Forster's quote quickly becomes a key theme underpinning actions on both sides of the Atlantic:

"If I was asked to choose whether to betray my country or my friend, I hope I'd have the courage to choose my country."

Verdict: damn good airport read!

read more and comment..